This year on Groundhog Day, February 2, Punxsutawney Phil, the senior marmot weathercaster in the U.S., predicted an early spring. Staten Island Chuck agreed. We didn’t spot our resident representative of his ilk, Chuck Lamberti, but we had an overcast sky that day, so I doubt he’d have seen his shadow. I’ll therefore hazard a guess that he would have voted for a quick end to winter.

However, though we had some warmer stretches between early February and late March, the days didn’t turn reliably warm until the last week of March, and the nights remain in the low 30s. On the last day of March I spent an hour or so doing garden cleanup in my shirtsleeves, then sat awhile with the sun warming my back. It felt good to do some physical work again after a long, sedentary winter. From now on I will do that on any day the weather permits.

Then on April 4 we had a light, wet snow … so it’s back and forth.

At the same time, those varmints all had it right. Not only did the nation have its warmest winter on record, but I had the warmest winter in my history of living in the U.S. Northeast. Definite climate change around these parts.

Among other consequences, this meant that we had only one or two notable snowstorms, which our new roofing handled easily, and our August pre-buy of fuel oil and propane has timed out just right. Improved with a heat reclaimer, as discussed earlier, our living-room fireplace worked more effectively, consumed a cord or two of our own homegrown wood, and kept the north end less chilly.

Beyond that, having a hearth in our home, with real flames dancing in it and the slight smell of burning wood in the air, creates a psychological effect that likely comes hardwired, going back to the caves. Fun fact: the English word focus comes straight from the Latin focus, which means hearth (foci is its plural). In homes before gaslight and electricity, the hearth offered the brightest illumination, and thus the most immediately available visual data, in the living space once darkness fell.

•

Local fauna update: We think we spotted a bobcat strolling up our driveway.

•

Here Comes the Sun

Just in time for the eclipse, we’ve gone fully solar. Through a program conducted by New York State Solar Farm (NYSSF) we now have an array of solar panels on the southern and western sides of our roof. These are the same panels that power the space station.

Our solar controls

The installers designed our setup so that, based on our past history of electrical consumption, this array should provide us with all the juice we need plus a 10 percent surplus. This generated power feeds into the grid, from which we continue to draw. Ideally, if we have a typically bright and sunny six months going forward from here, by late fall/early winter we will have covered our own day-to-day energy consumption while also banking enough kWh to see us through the winter. Eco-friendly green energy independence.

Financed through a no-interest short-term loan plus a low-interest long-term loan, this should pay for itself in 15 years. (The system is warranted to last for 25 years, and could endure much longer.) Meanwhile, we will save about $2,400 annually on our current electric bills from Central Hudson, our provider. (The package comes with several tax rebates. Given our modest income, we’ll have to see how much those benefit us when we get to next year’s filings.)
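
For anyone who likes to see the arithmetic, here is a rough back-of-envelope sketch in Python. It uses only three figures from this post ($2,400 a year in savings, a 15-year payback, a 25-year warranty) and assumes simple, undiscounted math: it ignores loan interest, the tax rebates, panel wear, and any change in Central Hudson’s rates. So the “implied net cost” it prints is an illustration of what those numbers suggest, not the actual price of our system.

```python
# Back-of-envelope payback sketch. Only the three constants below come from
# the post; the undiscounted model (no interest, rebates, or rate changes)
# is a simplifying assumption.

ANNUAL_SAVINGS = 2_400   # dollars saved per year, per the post
PAYBACK_YEARS = 15       # estimated payback period, per the post
WARRANTY_YEARS = 25      # warranted system lifetime, per the post


def implied_net_cost(annual_savings: float, payback_years: float) -> float:
    """Net cost implied by a simple (undiscounted) payback period."""
    return annual_savings * payback_years


def savings_after_payback(annual_savings: float, payback_years: float,
                          lifetime_years: float) -> float:
    """Undiscounted savings accrued between payback and end of lifetime."""
    return annual_savings * max(0.0, lifetime_years - payback_years)


if __name__ == "__main__":
    cost = implied_net_cost(ANNUAL_SAVINGS, PAYBACK_YEARS)
    extra = savings_after_payback(ANNUAL_SAVINGS, PAYBACK_YEARS, WARRANTY_YEARS)
    print(f"Implied net cost: ${cost:,.0f}")
    print(f"Savings over the remaining warranty years: ${extra:,.0f}")
```

Run as a script, it prints an implied net cost of $36,000 and a further $24,000 or so in savings over the ten warranty years left after payback, before accounting for anything the simple model leaves out.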

The apps that our new system provides — online via my desktop Mac Mini and on my Apple iPhone — allow real-time monitoring of the system … all quite amazing.

21 Lamberti solar array 2024

•

We now have underway a cluster of free improvements to our main house, delivered through the Weatherization Assistance Program (WAP), for which our income qualifies us. Underwritten by the New York State Energy Research and Development Authority (NYSERDA) and administered by Ulster County Community Action Committee (UCCAC), this will make us much more energy-efficient in ways both large and small. These include:

- Air-sealing of attic to prevent drafts, rodent intrusion, etc.

- New attic insulation (6″-16″ blown fiberglass) to cover old attic insulation (rolled fiberglass). Creation of walkway/storage area down the middle of the space.

- Removal of old insulation (rolled fiberglass) under cantilevered addition to house (my office).

- New insulation under addition (2″ closed-cell polyurethane spray foam).

- Repair damaged sheetrock in ceiling and walls of addition.

- Install 4 new thermal windows to replace old single-pane windows on main floor.

- New vent fans with humidistats (humidity sensors) in two bathrooms.

- New vent fan with humidistat in main room of basement.

- Sheetrock repair in basement laundry center.

- Weatherstripping and draft-proofing of various doors.

- New LED bulbs as needed.

- Various other small fixes.

21 Lamberti Lane, attic insulation

•

Beyond these upgrades, our budget for 2024 (absent any unexpected windfalls) allows only for modest, minor improvements. At the top of the list we put those we can do ourselves, preferably with tools and materials already on hand or available free or cheap: recycled, secondhand, scavenged, improvised.

As the weather gets warmer we have gardening on our mind, of course. We have established plantings, seeds aplenty, cuttings that (we hope) survived and rooted over the winter, and plans for a greenhouse.

•

Rendering Unto Caesar

We had our taxes for 2023 done, for free, by local volunteers in the AARP Tax-Aide program. Seniors helping seniors. Normally I do my own taxes, and Anna hers, separately. But this year we needed to file jointly, for the first time, in order to navigate the one-time tax situation of the sale of our Staten Island house. Two gentle, good-humored souls, both of them former tax accountants, sorted all of that out painlessly over the course of two hours at our local community center. We had our refund within three weeks. Highly recommended to readers of this blog. (There’s still time to sign up for this in your area.)

We had our taxes for 2023 done — for free — by local volunteers in the AARP TaxAide program. Seniors helping seniors. Normally I do my own taxes, and Anna hers, separately. But this year we needed to file jointly, for the first time, in order to navigate the one-time tax situation of the sale of our Staten Island house. All of which two gentle, humorful souls — both of them former tax accountants — sorted out painlessly over the course of two hours at our local community center. We had our refund within three weeks. Highly recommended to readers of this blog. (There’s still time to sign up for this in your area.)

•

Wetware Maintenance

Between the pandemic, the sale of the house, and the move up here, my Medicare Advantage plan changed several times. And I somehow managed to forget to establish a new primary care physician plus relevant specialists. Long story short, until March of this year I hadn’t seen a doctor since 2018. (I had my last visit with my long-term Manhattan dentist in summer 2022, and saw a new one up here in fall 2023, so the lag time re my teeth wasn’t so substantial.)

I don’t take any prescription meds, have no serious ailments, and feel basically okay. Nonetheless, at my age this avoidance of wetware maintenance wasn’t smart. Anyhow, having now turned 80, with work still to do and planning to get to 100 with all my f-a-c-u-l-t-i-e-s intact, I took myself in hand and decided to set up a new network of physicians and achieve a full assessment of my current health — as the song says, to see what condition my conditions are in.

I don’t take any prescription meds, have no serious ailments, and feel basically okay. Nonetheless, at my age this avoidance of wetware maintenance wasn’t smart. Anyhow, having now turned 80, with work still to do and planning to get to 100 with all my f-a-c-u-l-t-i-e-s intact, I took myself in hand and decided to set up a new network of physicians and achieve a full assessment of my current health — as the song says, to see what condition my conditions are in.

My latest MA plan started in January, so I’ve now identified and met with an excellent new PCP — both English- and Chinese-speaking, for easy communication with Anna in any emergency. We started with an office visit, which revealed that my previously borderline high blood pressure has risen to the “severe” level, requiring medication and some lifestyle adjustments (mostly dietary). Bloodwork followed, an analysis of which — translated into lay terms — awaits.

•

This post sponsored by a donation from Carlyle T.

•

Special offer: If you want me to either continue pursuing a particular subject or give you a break and (for one post) write on a topic — my choice — other than the current main story, make a donation of $50 via the PayPal widget below, indicating your preference in a note accompanying your donation. I’ll credit you as that new post’s sponsor, and link to a website of your choosing.

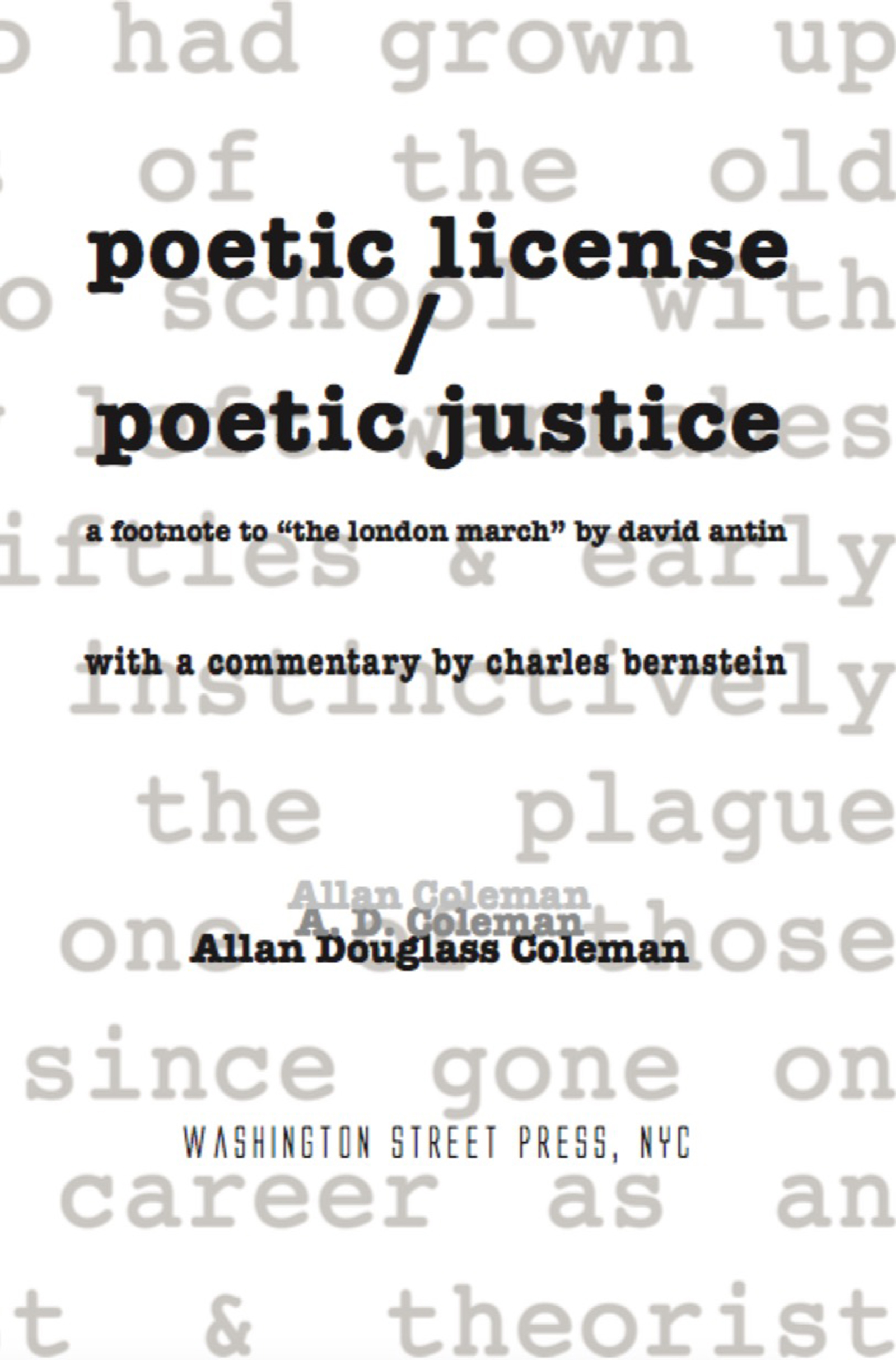

And, as a bonus, I’ll send you a signed copy of my new book, poetic license / poetic justice — published under my full name, Allan Douglass Coleman, which I use for my creative writing.

Nevertheless, They Persisted (1)

I have served as an expert witness in Graham v. Prince et al. (15‑cv‑10160) and McNatt v. Prince et al. (16‑cv‑08896), providing my services pro bono. This included drafting a written statement on behalf of the plaintiffs, responding to a specific set of questions posed by the lawyers for Graham and McNatt, and sitting through an extensive deposition by Prince’s high-priced lawyers. […]